with open(STOPWORDS_FILE_PATH, 'w') as fh:


Step 3: Format the source for further processing

With that, there's one last thing missing. Let's say I want to generate a word cloud for each article. This can be a quick way to get an idea about what a text is about. For this, install the package wordcloud and update the file like this: from os import path

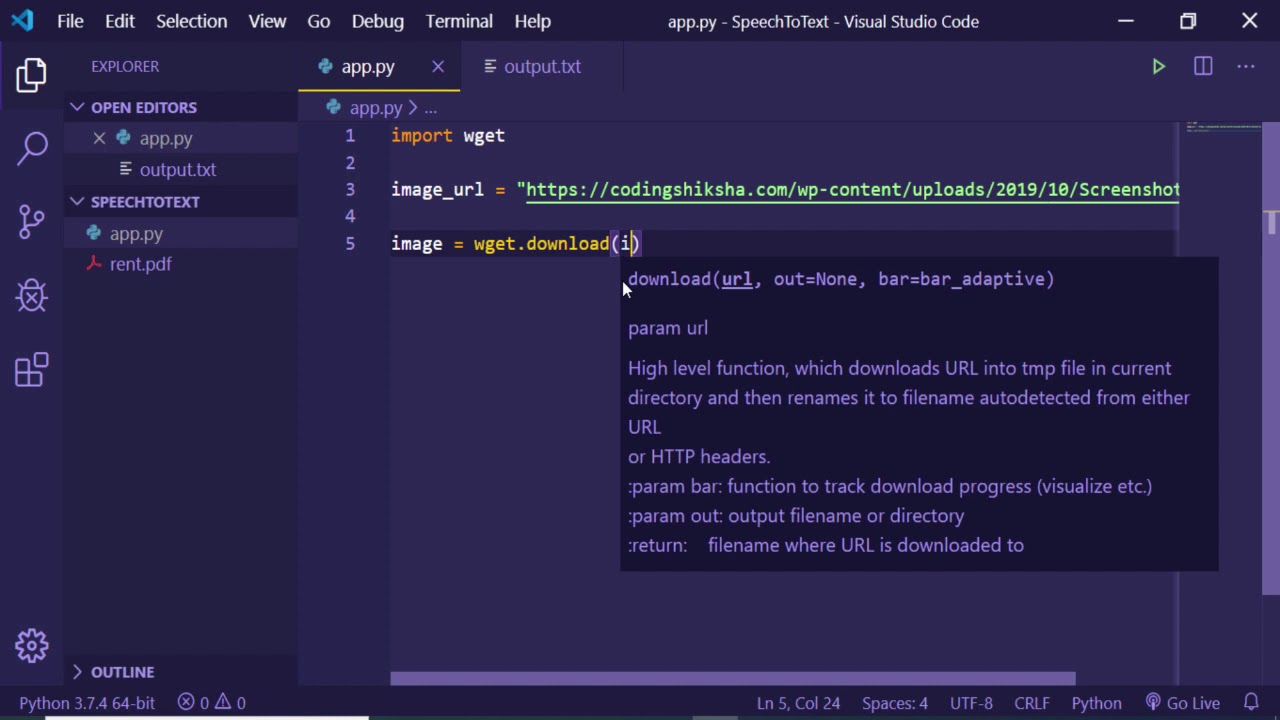
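A word cloud sizes each word by how often it occurs, so the counting step behind it can be sketched with the standard library alone. The following is only an illustration, not the article's listing: the function name `word_frequencies` and the sample stop words are assumptions made up for this sketch.

```python
import re
from collections import Counter


def word_frequencies(text, stopwords=frozenset()):
    """Count how often each word occurs, ignoring case and stop words.

    Hypothetical helper for illustration; it only prepares the counts
    that a word cloud would visualize.
    """
    # [^\W\d_]+ matches runs of letters, including umlauts such as "ü".
    words = re.findall(r"[^\W\d_]+", text.lower())
    return Counter(word for word in words if word not in stopwords)


# Example input and stop words are invented for this sketch.
frequencies = word_frequencies(
    'The people. The state and the people.',
    stopwords={'the', 'and'},
)
print(frequencies.most_common(1))  # [('people', 2)]
```

Filtering stop words before counting keeps filler words such as articles from dominating the picture. A mapping like this one is the kind of input a word cloud renderer works from.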


To keep things simple, I'll download files into the same directory next to the store and use their name as the filename. The script in scraper.py reports each saved file with print('Written to', file_path).

By downloading the files, I can process them locally as much as I want without being dependent on a server. Try to be a good web citizen, okay?

Step 2: Parse the source

Now that I've downloaded the files, it's time to extract their interesting features. Therefore I go to one of the pages I downloaded, open it in a web browser, and hit Ctrl-U to view its source. Inspecting it will show me the HTML structure. In my case, I figured I want the text of the law without any markup. The element wrapping it has an id of container. Using BeautifulSoup I can see that a combination of find and get_text will do what I want.

Since I have a second step now, I'm going to refactor the code a bit by putting it into functions and add a minimal CLI.
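The parsing step comes down to "find the element with the id container and keep only its text". With BeautifulSoup this is typically written as `BeautifulSoup(html, 'html.parser').find(id='container').get_text()`. The sketch below shows the same idea with Python's built-in `html.parser`, swapped in so that it runs without installing anything. The class and function names are hypothetical, and the depth counting assumes reasonably well-formed HTML.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag; they must not change the depth.
VOID_TAGS = {'area', 'base', 'br', 'col', 'embed', 'hr', 'img', 'input',
             'link', 'meta', 'source', 'track', 'wbr'}


class ContainerText(HTMLParser):
    """Collect the text inside the first element with a given id."""

    def __init__(self, element_id):
        super().__init__()
        self.element_id = element_id
        self.depth = 0    # 0 means "outside the target element"
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif not self.chunks and dict(attrs).get('id') == self.element_id:
            self.depth = 1

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            self.chunks.append(data)


def extract_text(html, element_id='container'):
    """Return the markup-free text of the element with the given id."""
    parser = ContainerText(element_id)
    parser.feed(html)
    parser.close()
    return ''.join(parser.chunks).strip()


# Invented sample page; the real pages are the downloaded HTML files.
sample = ('<html><body><h1>Navigation</h1>'
          '<div id="container"><h2>Art 1</h2>'
          '<p>Human dignity<br>shall be inviolable.</p></div>'
          '<p>Footer</p></body></html>')
text = extract_text(sample)
```

Only the text inside the container survives; the navigation heading and the footer are dropped. Pages with unclosed tags would confuse the depth counter, which is one reason a forgiving parser such as BeautifulSoup is the more practical choice for real pages.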

(Don't worry, I checked their Terms of Service. They offer an XML version for machine processing, but this page serves as an example of processing HTML. So it should be fine.)

Step 1: Download the source

First things first: I create a file urls.txt holding all the URLs I want to download. Next, I write a bit of Python code in a file called scraper.py to download the HTML of these files. In a real scenario, this would be too expensive and you'd use a database instead.
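The download step can be sketched roughly as follows. This is not the original scraper.py but a minimal illustration under a few assumptions: it uses the standard library's `urllib.request`, the function names are hypothetical, the `fetch` parameter exists only so the code can be tried without network access, and the `index.html` fallback for URLs without a file name is made up.

```python
from pathlib import Path
from urllib.parse import urlparse
from urllib.request import urlopen


def read_urls(urls_file):
    """Return the non-empty lines of the URL list, e.g. urls.txt."""
    with open(urls_file) as fh:
        return [line.strip() for line in fh if line.strip()]


def filename_for(url):
    """Use the last path segment of the URL as the local file name."""
    # Assumption: fall back to index.html when the URL ends in a slash.
    return Path(urlparse(url).path).name or 'index.html'


def fetch_bytes(url):
    """Download the raw response body of a URL."""
    with urlopen(url) as response:
        return response.read()


def download(url, target_dir, fetch=fetch_bytes):
    """Save one page into target_dir and return the path written."""
    file_path = Path(target_dir) / filename_for(url)
    file_path.write_bytes(fetch(url))
    print('Written to', file_path)
    return file_path
```

Calling `download(url, '.')` for every entry returned by `read_urls('urls.txt')` would place each page next to the URL list. Each page is then requested only once, and all later processing can happen on the local copies.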

You can find the code for this project in this git repository on GitHub.

For this example, we are going to scrape the Basic Law for the Federal Republic of Germany.


Web scraping is the process of extracting data from websites. Before attempting to scrape a website, you should make sure that the provider allows it in their terms of service. You should also check to see whether you could use an API instead. Massive scraping can put a server under a lot of stress, which can result in a denial of service.

This article assumes that you are already familiar with the Python programming language. At the very minimum you should understand list comprehensions, context managers, and functions. You should also know how to set up a virtual environment. We'll run the code on your local machine to explore some websites. With some tweaks you could make it run on a server as well.

What you will learn in this article

At the end of this article, you will know how to download a webpage, parse it for interesting information, and format it in a usable format for further processing.